Biography

I would describe myself as an endlessly curious, ambitious, and creative person. In all my work, I begin by identifying the most important goals and questioning any built-in assumptions. This first-principles approach frequently leads me to unique solutions that span multiple levels of hierarchy. I’ve made contributions in many fields, including generative AI, robotics, biomedical imaging, computer vision, hardware acceleration, and computer architecture.

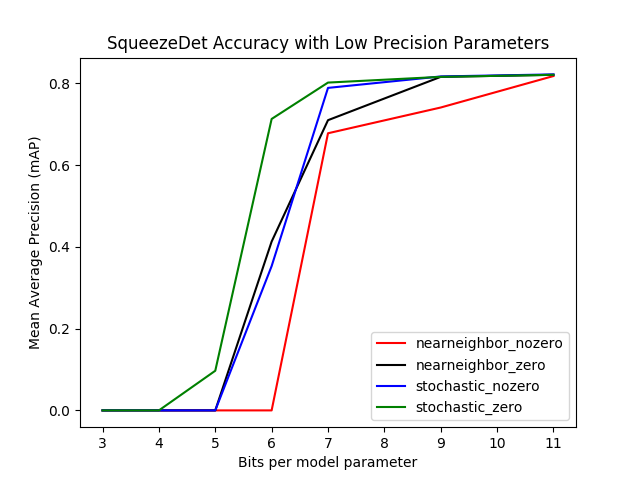

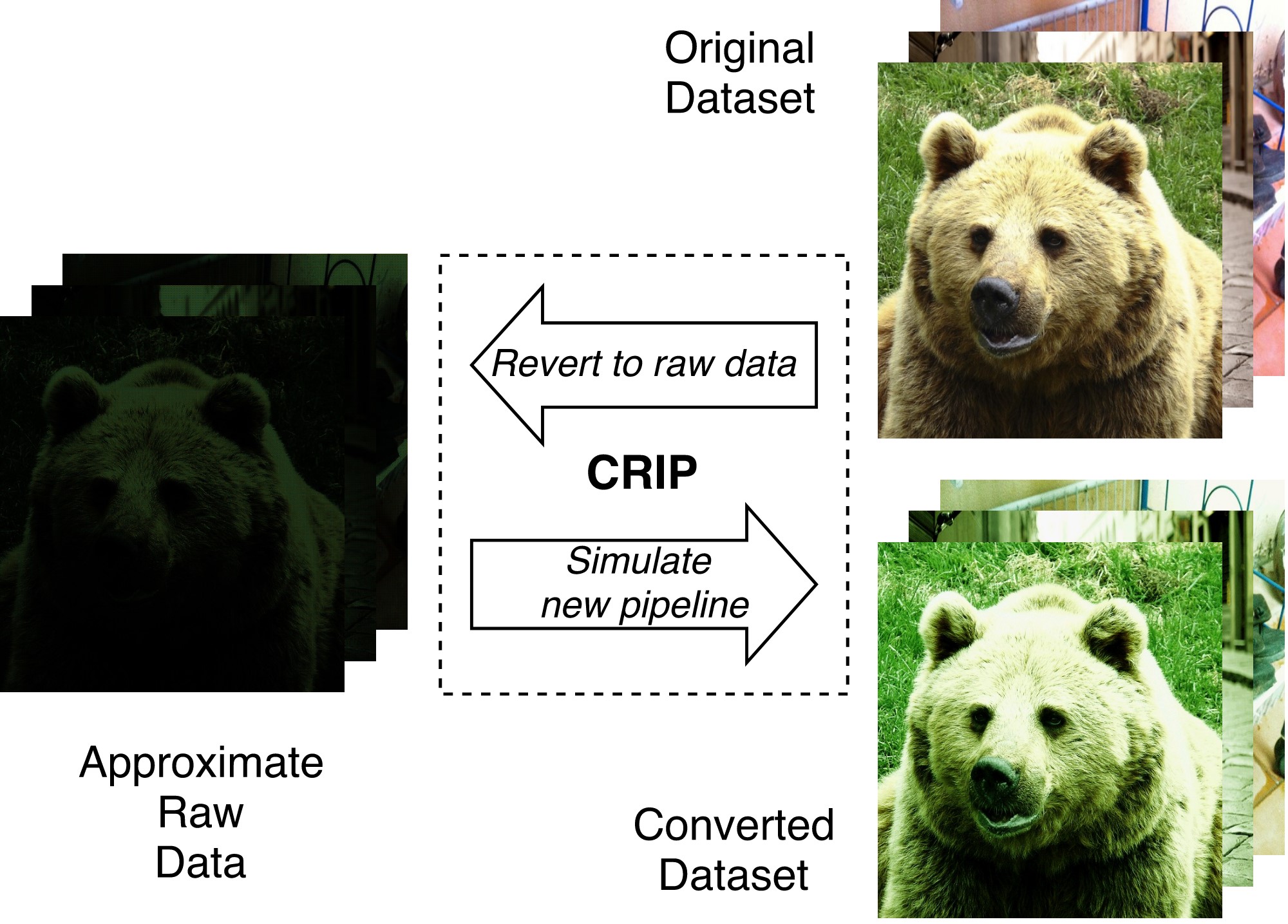

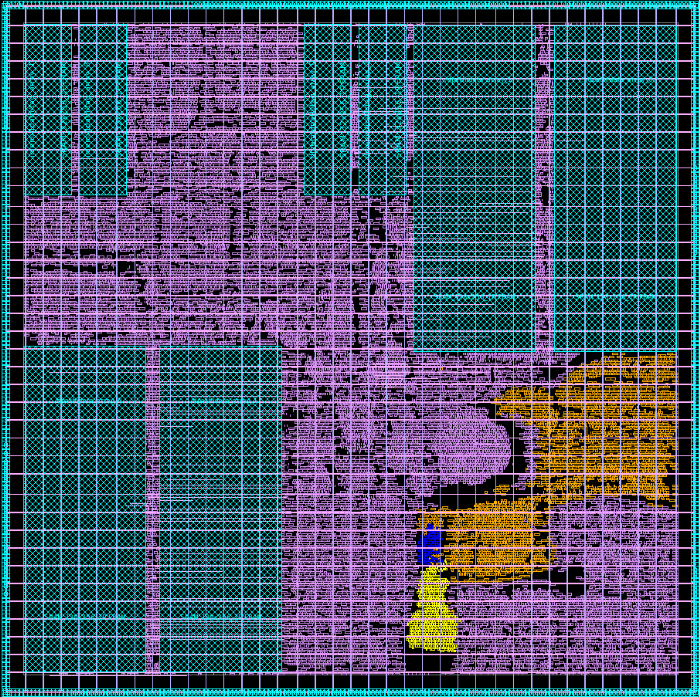

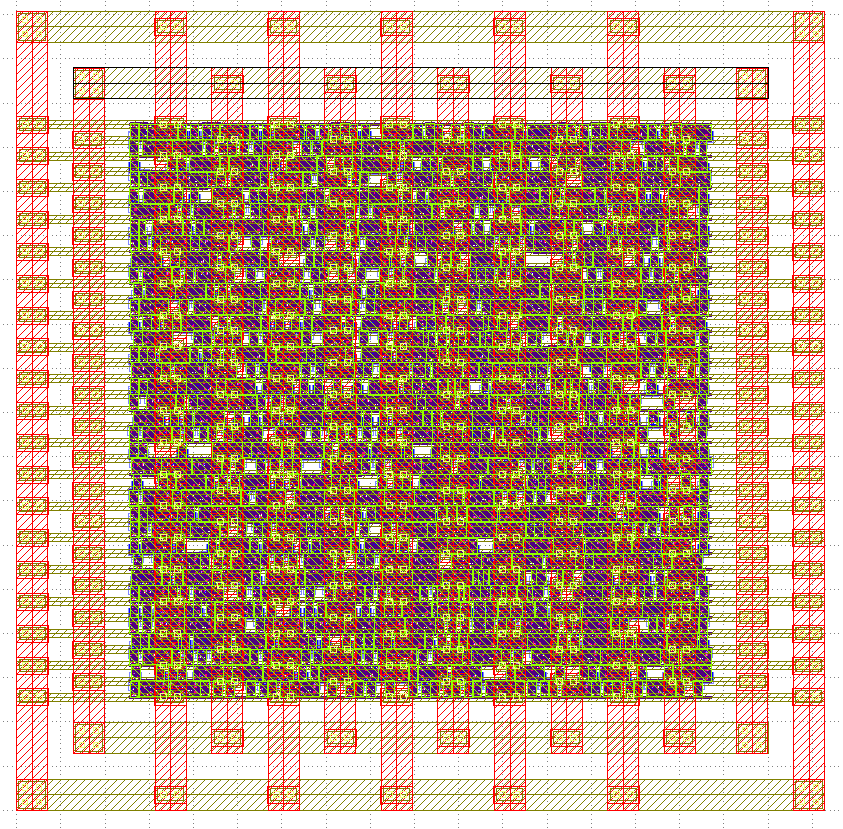

My passion for AI, deep learning, and hardware acceleration was supercharged during my PhD in Prof. Adrian Sampson’s research group at Cornell University. My thesis focused on hardware-accelerated deep learning for computer vision.

My entrepreneurial spirit has led me to found three companies so far: Firebrand Innovations, which sold videoconferencing IP; Codex Collective, which produces electronic music events in Seattle; and most recently Soundry AI, which provides an AI text-to-sample generator for music producers and songwriters. In my free time, I enjoy writing music and DJing.

Interests

- Generative AI

- Deep Learning

- Computer Vision

- Computer Architecture

Education

- PhD in Electrical and Computer Engineering, 2019

  Cornell University

- M.S. in Electrical and Computer Engineering, 2014

  University of Massachusetts, Amherst

- B.S. in Electrical Engineering, 2012

  Rensselaer Polytechnic Institute